2 AM AI Talks: Why People Are Turning to AI for Emotional Support

Why are people turning to AI for emotional support at 2 AM? Explore the rise of AI emotional conversations, mental health accessibility, and changing expectations around emotional support.

Wulan

5/9/2026 · 6 min read

The Rise of 2 AM AI Talks

Recently, 2 AM AI talks have quietly become a phenomenon.

Driven by work exhaustion, family pressure, relationship problems, restless thoughts, loneliness, and insomnia, more people are turning to AI chatbots late at night. They type things they may not feel comfortable saying out loud elsewhere:

“I feel like my life is falling apart.”

What begins as a single line of emotional release often turns into a late-night conversation about anxiety, burnout, overthinking, emotional exhaustion, or the quiet fear that something feels wrong internally.

Eventually, for some people, these 2 AM AI talks become a ritual.

Not because they believe AI is human.

But because, strangely enough, it feels emotionally easier to talk to.

Over the past year, this behavior has become increasingly common online. People are no longer using AI only for productivity or information.

Increasingly, they are using AI chatbots for emotional processing: to vent, reflect, seek reassurance, or simply feel heard.

AI chatbots are increasingly being used for emotional support, stress relief, and late-night mental health conversations. While they are not replacements for therapy, many people are turning to AI to process emotions, navigate anxiety, and cope with burnout or loneliness.

The interesting question is not whether AI can replace therapists. Most people already understand that it cannot.

The more important question is why so many people emotionally open up to AI in the first place.

Mental Health Awareness Has Changed

Part of the answer may lie in changing attitudes toward mental health itself.

Emotional struggles are discussed far more openly today than they were in previous generations. Anxiety, burnout, emotional exhaustion, and therapy have become part of mainstream conversation, especially among younger people, who are generally more comfortable acknowledging psychological stress than suppressing it silently.

However, awareness does not always translate into professional help.

Sometimes people are not seeking therapy at all. Sometimes they simply want a space to release thoughts, process emotions, or make sense of what they are feeling without pressure or fear of judgment.

And increasingly, many people are turning to AI to facilitate that emotional processing.

Not necessarily because they believe AI understands them better than humans, but because it offers something psychologically important: a space that feels low-risk, instantly available, and easy to open up to.

Why AI Feels Emotionally Safe

Psychologically, this connects closely to the idea of perceived psychological safety. This refers to environments where individuals feel safe expressing thoughts and emotions without fear of embarrassment, rejection, or criticism.

Traditionally, psychological safety is associated with therapy, relationships, or supportive social environments. But AI unexpectedly replicates some of these same emotional conditions.

There are no facial reactions.

No awkward silences.

No fear of sounding dramatic.

No pressure to explain emotions perfectly.

Users can rewrite thoughts or express emotions imperfectly without social discomfort.

In many ways, AI feels as private as journaling, but as responsive as counselling.

Behaviorally, this also aligns with what psychologists sometimes describe as low-friction disclosure. People are generally more willing to share vulnerable thoughts when social risk feels lower.

AI removes many barriers to vulnerability:

fear of judgment,

fear of embarrassment,

fear of being misunderstood,

or the emotional exhaustion of explaining oneself repeatedly.

For emotionally overwhelmed individuals, that reduction in friction can feel deeply comforting.

Why the “2 AM” Timing Matters

The timing itself is psychologically important.

Late-night emotional spirals are not random. Research in emotional regulation and behavioral psychology suggests that emotional resilience tends to weaken when people are tired, isolated, overstimulated, or mentally overloaded.

At night, overthinking intensifies while emotional support becomes less accessible.

Friends may be asleep.

Family members may not feel emotionally safe to talk to.

Therapy appointments may still be days away.

Traditional mental healthcare systems are structured around schedules. Emotional distress is not.

AI fills that gap instantly.

For many people, the appeal is not necessarily clinical advice. It is emotional availability.

The Cost and Accessibility Factor

Accessibility and affordability also play a role.

Unlike treating a short-term illness, mental healthcare often requires ongoing emotional and financial commitment.

Therapy often involves recurring sessions, long waits, and emotional vulnerability.

For many people, AI feels easier because it is immediate, emotionally responsive, available at any hour, and often free or low-cost.

But Is AI Actually a Safe Space?

AI may feel emotionally safe, but feeling safe and being safe are not necessarily the same thing.

While AI can generate comforting responses, it lacks emotional understanding, clinical judgment, and therapeutic responsibility.

There is also the possibility of emotional overreliance. AI conversations can sometimes create reassurance loops by offering constant validation without appropriately challenging unhealthy thinking patterns.

This does not mean AI has no emotional value. In many cases, it may genuinely help people process thoughts, reflect on emotions, or feel less isolated during difficult moments.

But emotional comfort should not automatically be mistaken for therapeutic care.

How This Could Change Mental Healthcare

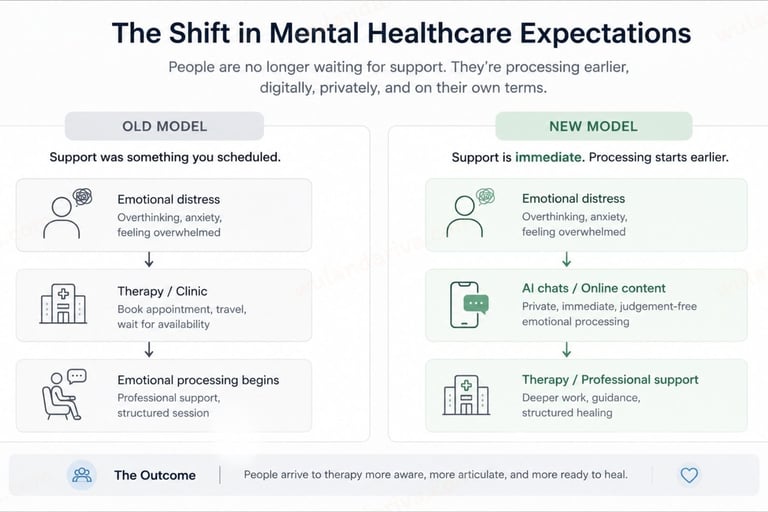

The rise of 2 AM AI talks may reveal something much larger about how emotional support is changing.

AI is changing both emotional processing and expectations around support.

Traditional mental healthcare systems, meanwhile, are often slower, more formal, and structured around appointments.

This creates a growing expectation gap.

People are increasingly becoming accustomed to:

immediate responses,

low-pressure conversations,

emotional accessibility,

conversational interaction,

and emotionally approachable support.

For psychology practices, this may represent less of a technological threat and more of a behavioral shift in patient expectations.

Increasingly, emotional accessibility itself may become part of how patients evaluate mental healthcare.

Emotional Accessibility Is Becoming Part of Care

The First Layer of Trust Now Forms Digitally

Traditionally, psychology practices have focused heavily on clinical expertise, qualifications, and structured treatment pathways.

While these remain essential, emotionally overwhelmed individuals are often evaluating something else long before therapy begins:

“Does this feel emotionally safe enough for me to open up?”

That first layer of trust is increasingly forming digitally, through social content, educational material, and even anonymous AI conversations late at night.

This may push psychology practices to rethink not only treatment itself, but also how emotional safety is communicated before someone even books an appointment.

Because increasingly, emotional readiness is being shaped online long before clinical engagement begins.

Mental Healthcare Communication May Need To Become More Emotionally Specific

Burnout and emotional exhaustion are often communicated generically online, even though different groups experience psychological pressure differently.

As emotional processing increasingly begins digitally, mental healthcare communication may need to become more emotionally specific and emotionally approachable before it becomes clinically educational.

Social Media Content

Mental health content may become more effective when it reflects recognizable emotional experiences, not just clinical explanations.

Educational Blogs and Articles

Educational content may need to establish emotional recognition before introducing clinical insight.

Outreach Messages

Many psychology practices already conduct outreach across workplaces, schools, NGOs, women’s organizations, and community groups.

School and educational outreach may need to acknowledge academic pressure, identity struggles, and emotional overwhelm during transitional life stages.

Government and community outreach may need to recognize emotional fatigue linked to responsibility, conflict management, and emotional suppression.

Women-focused outreach may need to approach mental wellbeing through themes such as emotional labor, caregiving pressure, burnout, and invisible responsibilities.

Workplace mental health outreach may require different emotional angles depending on whether burnout comes from deadlines, overperformance, cognitive overload, conflict, or the inability to mentally “switch off.”

Patient-Facing Communication

Even smaller touchpoints, such as website wording, front desk communication, or appointment onboarding, may influence whether a patient feels emotionally safe enough to engage.

Because increasingly, communication itself becomes part of how emotional trust is formed.

Emotional Approachability May Matter Before Clinical Engagement

Increasingly, mental healthcare may need to feel emotionally approachable before clinical engagement can begin.

Often, emotionally overwhelmed individuals are first searching for recognition, emotional reassurance, and language that reflects what they are feeling before they are ready for clinical engagement.

This may reshape how psychology practices communicate digitally, through social media, educational content, workplace outreach, blogs, or mental health campaigns.

AI May Become a Bridge, Not a Replacement

This does not necessarily mean AI will replace therapists.

A more realistic future may involve AI functioning as:

a reflection tool,

a between-session support system,

or an emotional bridge that helps individuals become more comfortable seeking professional help.

In that sense, the rise of 2 AM AI talks may not simply be about technology entering mental healthcare.

It may reflect a broader shift in how people seek reassurance, safety, and connection in an overwhelmed world.

The rise of 2 AM AI talks says less about technology itself, and more about how deeply people are searching for emotional safety, understanding, and connection in an increasingly overwhelmed world.

About the Author

Wulan writes about healthcare communication, emotional behavior, and how digital interaction is reshaping patient trust and engagement.

Explore how strategic communication can support patient trust, engagement, and digital positioning in modern healthcare.